AI Evals

Overview

This AI product management course focuses on evaluating AI systems with structured evaluation frameworks, metrics, and testing pipelines. It covers how to define quality, measure performance, and build evaluation suites that capture real-world failure modes. The material applies directly to product work such as validating models before launch, setting deployment gates, and monitoring production systems for drift, bias, and reliability issues. It also addresses the tradeoffs between automated and human evaluation and how to iterate on models based on measurable outcomes. The course is best suited for PMs, ML product managers, and teams responsible for the quality and validation of AI systems in production.

Related Courses

These recommendations are ranked by matching primary tag first, then by broader tag overlap, then by shared category.

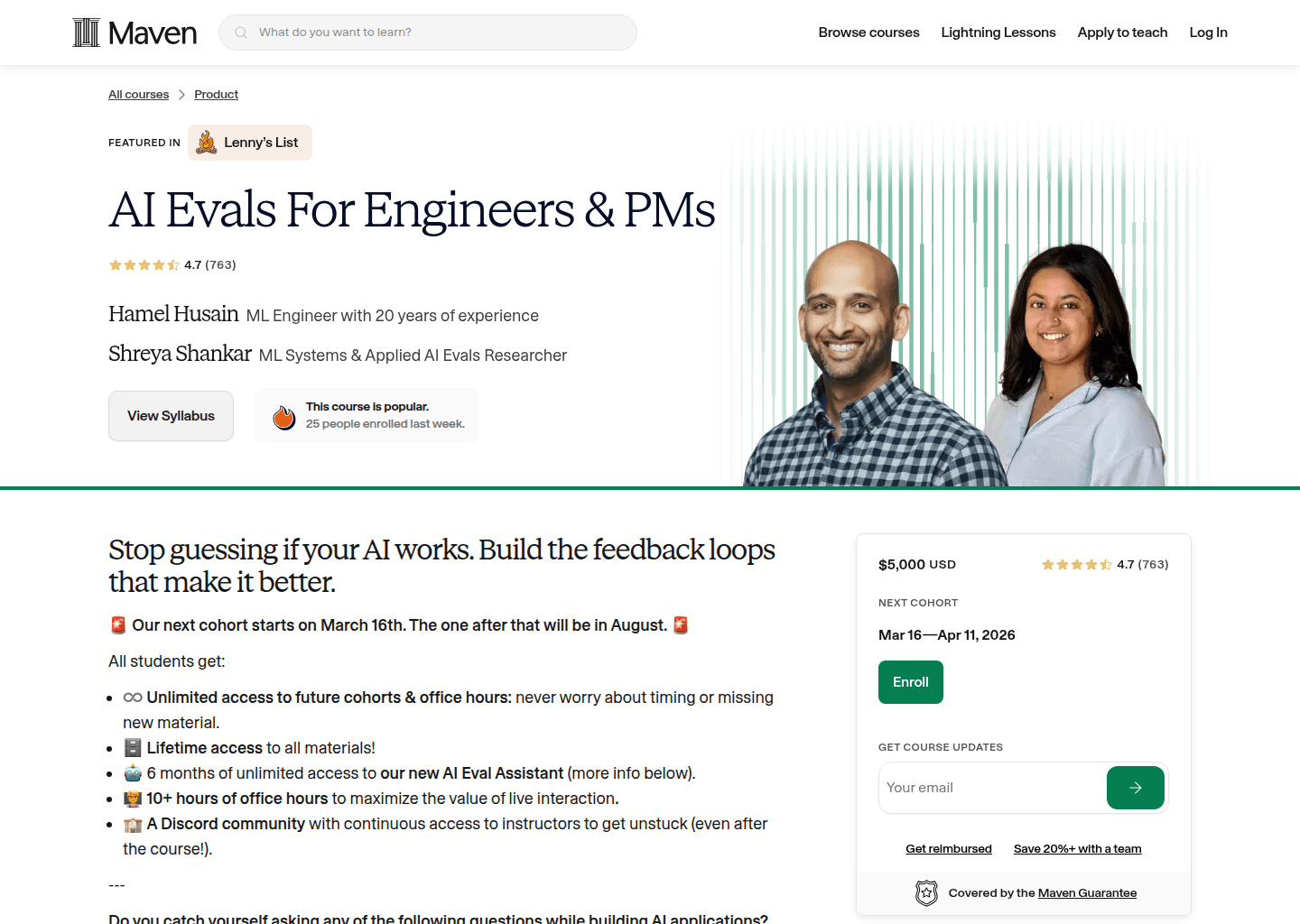

AI Evals For Engineers & PMs

Eliminate guesswork in AI products by learning how to design, run, and trust rigorous evaluations.

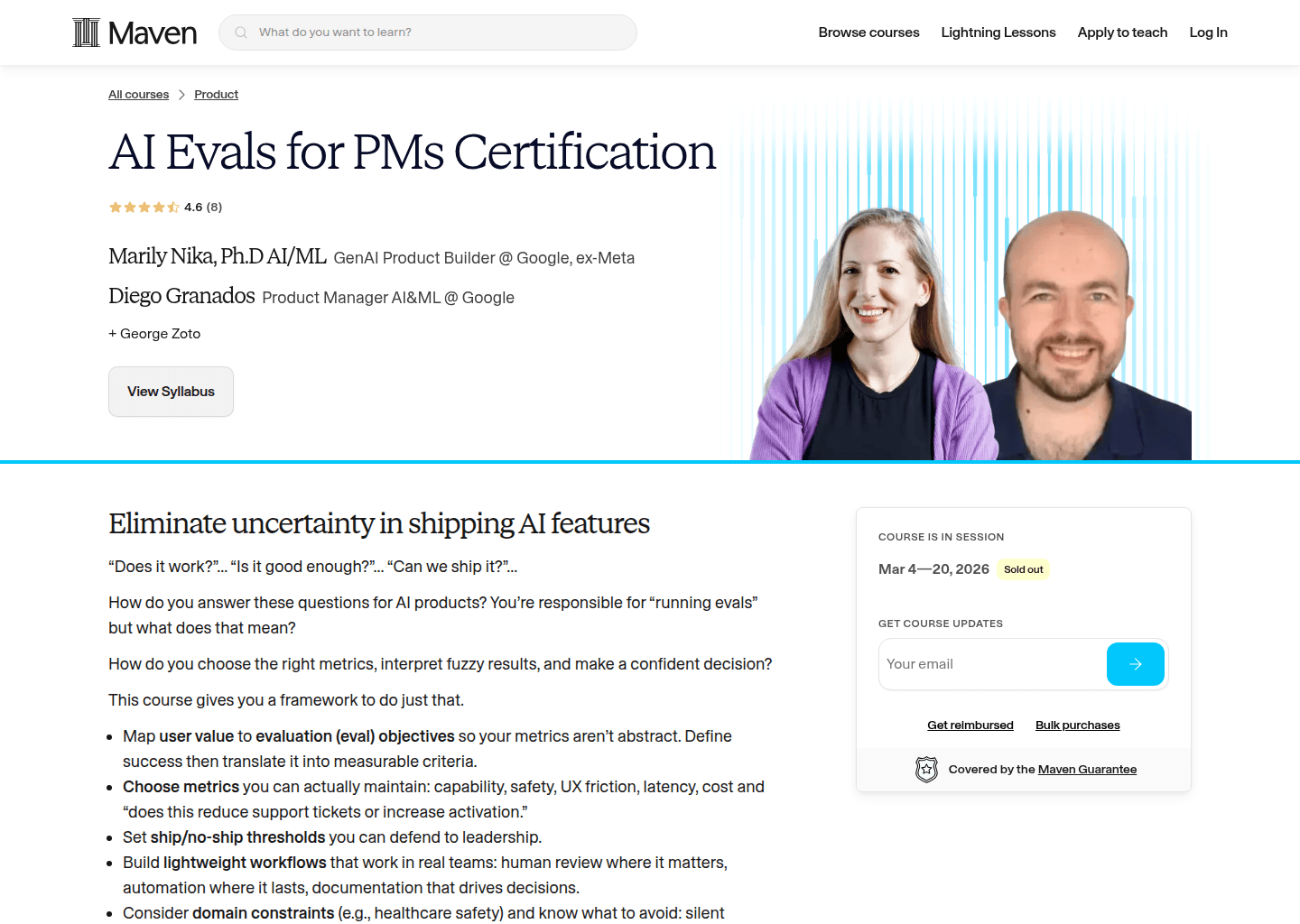

AI Evals for PMs Certification

Turn fuzzy AI quality questions into concrete evals, metrics, and ship decisions that leadership will trust.

Prompt Engineering

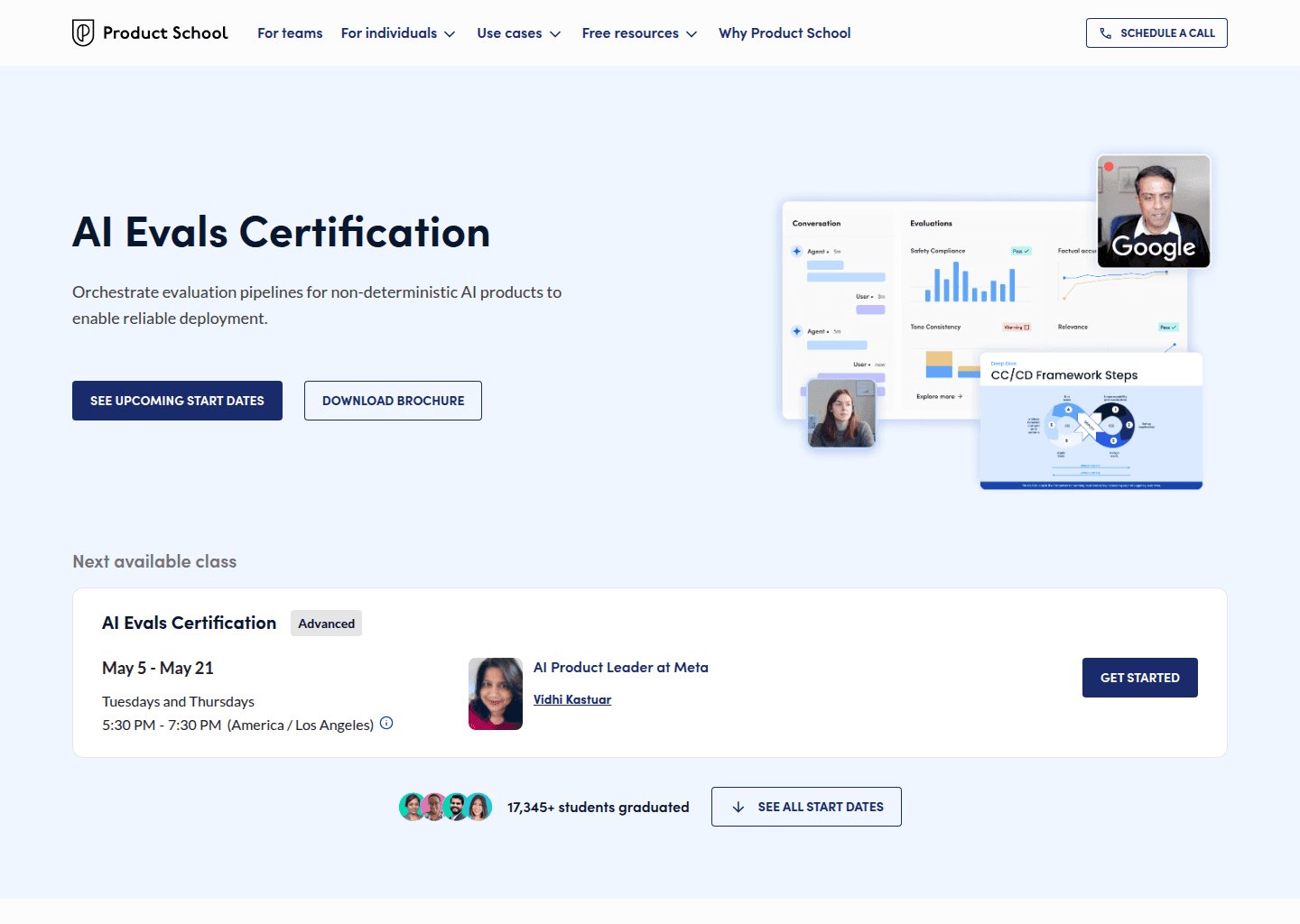

AI Evals for Product Development

AI Evaluations for Product Managers

Automate AI Evals with Claude Code